The "No" Button: Stopping Bad Configs Before They Wake You Up at 3 AM

You get a Slack message at 8 PM. A critical production service is down. After an hour of frantic debugging, you find the cause: a developer deployed a new version without resource limits, and the pod went rogue, triggering a cascading failure. We've all been there. Managing multiple Kubernetes clusters and development teams can feel like a constant battle against inconsistency and overlooked best practices.

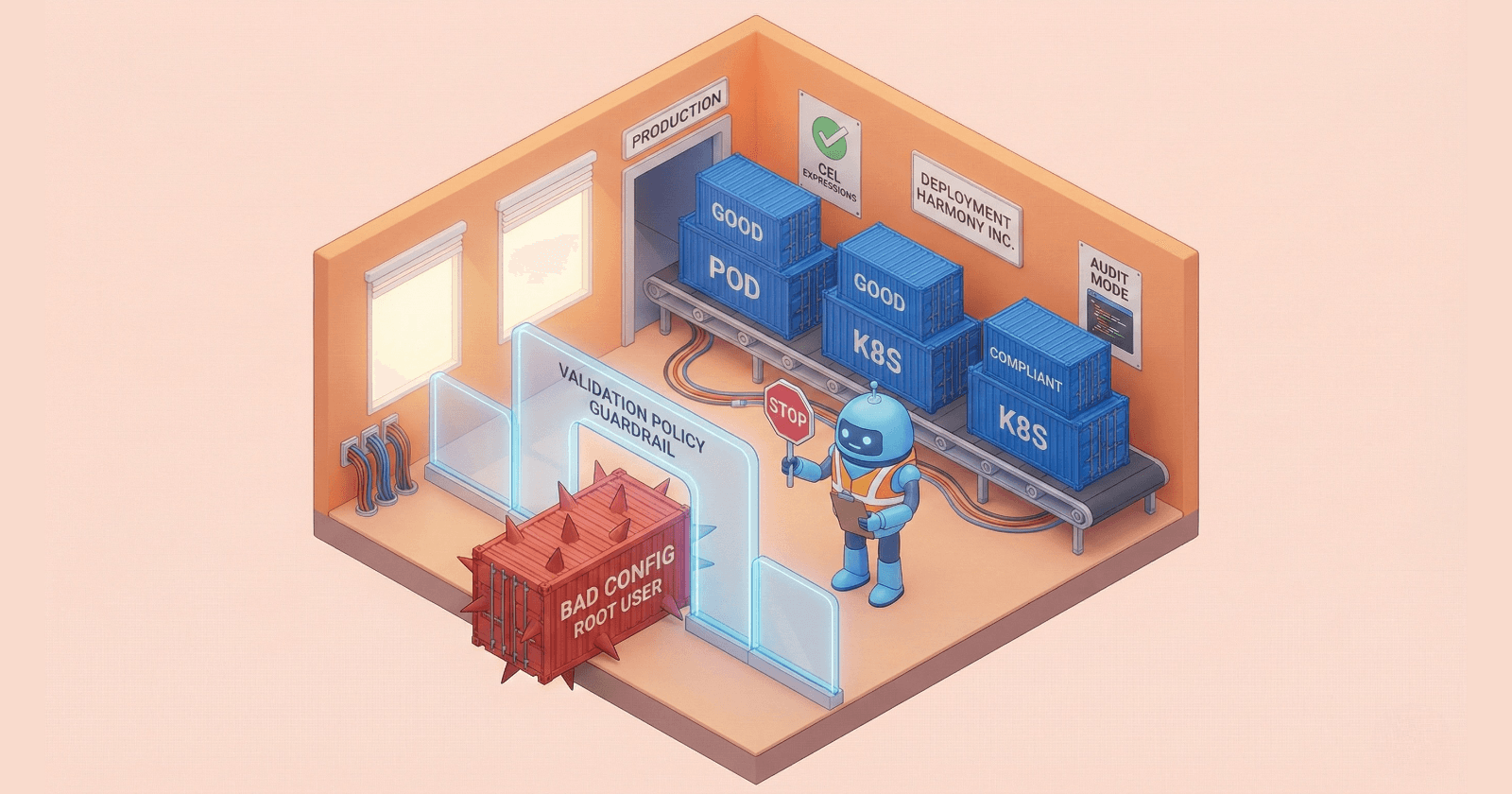

In the previous two articles in this series, we covered the foundations of policy-as-code and explored the magic of mutation policies for automatically correcting configurations. Now, we turn our attention to the most powerful tool in the policy engine arsenal: Validation Policies. This article will focus on the most impactful and perhaps counter-intuitive uses of Validation Policies with Kyverno, presenting them not as a tool for enforcement, but for empowerment.

Guardrails, not Gates: Helping Devs Without Being a Blocker

The most effective policy strategy isn't about building gates that simply block developers when they do something wrong. It's about creating "guardrails" that gently guide them toward best practices, making the right way the easy way.

This philosophy is where Kyverno’s simplicity shines. Unlike tools that require learning a specialized language like Rego, Kyverno uses simple, declarative YAML for policy definitions. This approachability makes it easier for platform and development teams to collaborate on policy creation and maintenance. Instead of a complex, opaque language owned by a central team, policies become shared artifacts that everyone can understand and contribute to.

A great analogy for this is a validated drop-down list in a web form. The dropdown is a preventive control; it stops you from entering bad data from the very start. But it does so in a helpful, intuitive way by showing you the valid options. A good Kyverno policy works the same way. It prevents a misconfigured resource from ever entering the cluster, but when paired with clear error messages, it teaches the developer what needs to be fixed.

This turns policy management from a confrontational process into a collaborative one, improving both security posture and developer velocity. This isn't just about developer happiness; it's about shipping more secure code, faster, by shrinking the feedback loop from days (in a security review) to seconds (at kubectl apply).
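To make the guardrail idea concrete, here is a minimal sketch of a rule whose rejection message tells the developer exactly what to fix. The policy name, rule name, and message wording are illustrative assumptions; the rule uses Kyverno's pattern syntax, where "?*" stands for any non-empty value.

```yaml
apiVersion: kyverno.io/v1
kind: ClusterPolicy
metadata:
  name: require-cpu-limits            # hypothetical name
spec:
  validationFailureAction: Enforce
  rules:
    - name: containers-need-cpu-limits   # hypothetical name
      match:
        any:
          - resources:
              kinds:
                - Pod
      validate:
        # The message is what the developer sees when kubectl apply is rejected,
        # so it should say what is wrong and how to fix it.
        message: >-
          Every container must set resources.limits.cpu.
          Add a CPU limit to each container and re-apply.
        pattern:
          spec:
            containers:
              # Applied to every container in the list
              - resources:
                  limits:
                    cpu: "?*"
```

A rejected request then carries the message back to the developer at apply time, which is the "drop-down list" behavior described above: the bad input is refused, and the valid option is shown.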

The "Root" of all Evil: Why We Block Root Users

One of the most fundamental Kubernetes security best practices is to prevent containers from running as the root user. A process running as root inside a container gives an attacker far more leverage if a vulnerability is exploited, making privilege escalation much easier. Enforcing this rule manually across hundreds or thousands of workloads is impractical.

This is a perfect use case for a preventive validation policy. With a few lines of YAML, you can create a cluster-wide rule that automatically rejects any pod that does not declare it will run as a non-root user.

Here is a simple Kyverno ClusterPolicy that uses a Common Expression Language (CEL) expression to validate that pods are configured to run as a non-root user:

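    apiVersion: kyverno.io/v1
    kind: ClusterPolicy
    metadata:
      name: disallow-root-user
    spec:
      validationFailureAction: Enforce
      rules:
        - name: validate-run-as-non-root
          match:
            any:
              - resources:
                  kinds:
                    - Pod
          validate:
            cel:
              # Kyverno expects a list of expressions under "expressions"
              expressions:
                # The has() guards avoid an evaluation error when securityContext is unset
                - expression: "has(object.spec.securityContext) && has(object.spec.securityContext.runAsNonRoot) && object.spec.securityContext.runAsNonRoot == true"
                  message: "Pods must set spec.securityContext.runAsNonRoot to true."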

This simple, declarative rule is incredibly powerful. It enforces a critical security standard across the entire cluster without any manual intervention, rejecting non-compliant pods before they are even scheduled. Note that the expression checks only the pod-level securityContext; a container-level securityContext can override that setting, so a stricter policy would check each container as well.
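For reference, here is a minimal sketch of a Pod that should satisfy the policy above. The pod name, image, and user ID are placeholders chosen for illustration.

```yaml
apiVersion: v1
kind: Pod
metadata:
  name: demo-app                      # hypothetical name
spec:
  securityContext:
    # The field the policy inspects
    runAsNonRoot: true
    # An explicit non-zero UID; without it, the image itself must
    # declare a non-root user or the kubelet refuses to start the container
    runAsUser: 10001
  containers:
    - name: app
      image: registry.example.com/demo-app:1.0.0   # placeholder image
```

Removing the pod-level securityContext block from this manifest is a quick way to confirm that the policy rejects the request.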

Audit Mode: How to Test Rules Without Causing a Mass Revolt

A common pitfall I see is platform teams rolling out a new, strict policy that immediately breaks existing workflows or blocks a critical production deployment. This is the moment where the platform team is seen as a blocker, causing friction and frustration. How do you enforce standards without causing a developer revolt?

This is where Kyverno’s distinction between preventive (Enforce) and detective (Audit) controls becomes a lifesaver.

• Enforce Mode: This is a preventive control. It actively blocks any resource that violates the policy before it can be created or updated in the cluster.

• Audit Mode: This is a detective control. It allows non-compliant resources to be created but flags them for review by generating policy reports.

This dual-mode capability allows for a safe, gradual rollout strategy. As Adevinta's engineering team noted in their tech blog on their transition to Kyverno:

"In audit mode, Kyverno will not reject any request but instead will produce a resource inside the cluster called 'admissionreport.kyverno.io' and also 'policyreport.kyverno.io'."

This enables a practical and low-stress workflow for introducing new policies:

1. Deploy in Audit Mode: Initially, deploy all new policies with validationFailureAction: Audit.

2. Monitor Reports: Observe the generated policy reports to see which existing workloads are non-compliant and understand the real-world impact of the new rule.

3. Collaborate and Remediate: Work with the relevant development teams to help them fix their configurations based on the audit data.

4. Switch to Enforce Mode: Once you’ve confirmed that all critical workloads are compliant, you can confidently switch the policy to validationFailureAction: Enforce, knowing it won't cause unexpected disruptions.

This feature transforms policy implementation from a high-risk, all-or-nothing event into a gradual, data-driven process that builds trust between platform and development teams.
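The four steps above can be sketched as a single policy whose only change over time is one field. This is a minimal illustration that reuses the disallow-root-user rule from earlier; the message text is an assumption.

```yaml
apiVersion: kyverno.io/v1
kind: ClusterPolicy
metadata:
  name: disallow-root-user
spec:
  # Steps 1-3: violations are reported but requests are still admitted.
  # Step 4: change this single value to Enforce once the reports are clean.
  validationFailureAction: Audit
  rules:
    - name: validate-run-as-non-root
      match:
        any:
          - resources:
              kinds:
                - Pod
      validate:
        cel:
          expressions:
            - expression: "has(object.spec.securityContext) && has(object.spec.securityContext.runAsNonRoot) && object.spec.securityContext.runAsNonRoot == true"
              message: "Pods must set spec.securityContext.runAsNonRoot to true."
```

Keeping the rule body identical between the two modes means the switch to Enforce is a one-line diff that is easy to review and easy to roll back.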

CEL Expressions: It’s Like Excel Formulas, but for Kubernetes Security

One of the game-changing features in modern Kubernetes policy enforcement is the Common Expression Language (CEL). CEL provides a standardized, declarative way to write validation logic directly into Kubernetes resources. This is a significant shift: for many common validation rules, you may no longer need a separate policy engine like Gatekeeper or even Kyverno's own pattern-matching engine, because Kubernetes can now evaluate CEL natively through ValidatingAdmissionPolicy (generally available since Kubernetes 1.30). And because Kyverno offers first-class support for this standard, it gives you an incredibly powerful toolset.

Think of CEL expressions like writing formulas in a spreadsheet. You're given an object (the Kubernetes resource YAML being submitted) and you can write simple, powerful expressions to inspect its fields and validate its contents.
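To show what the native capability looks like without any policy engine installed, here is a minimal sketch of a Kubernetes ValidatingAdmissionPolicy and its binding. The resource names, the "team" label requirement, and the message are illustrative assumptions.

```yaml
apiVersion: admissionregistration.k8s.io/v1
kind: ValidatingAdmissionPolicy
metadata:
  name: require-team-label            # hypothetical name
spec:
  failurePolicy: Fail
  matchConstraints:
    resourceRules:
      - apiGroups: ["apps"]
        apiVersions: ["v1"]
        operations: ["CREATE", "UPDATE"]
        resources: ["deployments"]
  validations:
    # "object" is the resource being submitted, like a cell in a spreadsheet formula
    - expression: "has(object.metadata.labels) && 'team' in object.metadata.labels"
      message: "Deployments must carry a 'team' label."
---
# A policy has no effect until a binding puts it into action
apiVersion: admissionregistration.k8s.io/v1
kind: ValidatingAdmissionPolicyBinding
metadata:
  name: require-team-label-binding    # hypothetical name
spec:
  policyName: require-team-label
  validationActions: ["Deny"]
```

The expression is the same kind of CEL you would place inside a Kyverno rule; what differs is the wrapper around it and who evaluates it.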

Here are a few common examples of what you can do with CEL:

• Checking for a specific label, which ensures a resource is correctly attributed to the platform team: object.metadata.labels.team == 'platform'

• Ensuring all containers have CPU limits, which prevents runaway processes from starving other workloads and causing cluster-wide instability.

• Requiring at least one annotation, which is useful for ensuring resources have the necessary metadata for monitoring or automation tools: has(object.metadata.annotations) && object.metadata.annotations.size() > 0

• Validating an image registry using regex, a critical supply-chain security control that ensures only vetted images from your organization's registry can run in the cluster (the dot is escaped so it matches only a literal "."): object.spec.containers.all(container, container.image.matches('^my-trusted-registry\\.io/.*'))

With CEL, you get a simple yet incredibly powerful way to write complex, fine-grained validation rules directly in your Kyverno policies, making them more expressive and easier to maintain.
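As a rough sketch of how checks like these fit together, the policy below places two of them in one Kyverno rule, each with its own message. The policy and rule names are hypothetical, the CPU-limit expression is one possible way to write that check, and the registry check uses startsWith rather than a regex to avoid escaping issues inside YAML strings.

```yaml
apiVersion: kyverno.io/v1
kind: ClusterPolicy
metadata:
  name: pod-baseline-checks           # hypothetical name
spec:
  validationFailureAction: Audit
  rules:
    - name: cpu-limits-and-registry   # hypothetical name
      match:
        any:
          - resources:
              kinds:
                - Pod
      validate:
        cel:
          expressions:
            # Every container must declare a CPU limit
            - expression: >-
                object.spec.containers.all(c,
                has(c.resources) && has(c.resources.limits) &&
                'cpu' in c.resources.limits)
              message: "Every container must set resources.limits.cpu."
            # Every container image must come from the trusted registry
            - expression: "object.spec.containers.all(c, c.image.startsWith('my-trusted-registry.io/'))"
              message: "Images must be pulled from my-trusted-registry.io."
```

Giving each expression its own message means a failing request reports the specific check that failed. Note that both expressions cover only spec.containers; init containers would need their own checks.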

Real Talk: How to Gently Force People to Use Labels

A common problem in any large cloud or Kubernetes environment is inconsistent or missing labels. Without proper tagging, it becomes nearly impossible to answer basic questions like, "How much does this application cost?" or "Who owns this service?" This lack of metadata hygiene leads to operational blind spots and makes cost allocation a nightmare.

The solution is to define a tagging standard and then enforce it with a validation policy. First, decide on a set of required labels (e.g., app, owner, cost-center). Second, create a Kyverno policy to ensure every new resource has them.

This ClusterPolicy uses CEL to validate that all new Deployments contain the required labels:

    apiVersion: kyverno.io/v1
    kind: ClusterPolicy
    metadata:
      name: require-standard-labels
    spec:
      validationFailureAction: Enforce
      rules:
        - name: check-for-standard-labels
          match:
            any:
              - resources:
                  kinds:
                    - Deployment
          validate:
            cel:
              # Kyverno expects a list of expressions under "expressions"
              expressions:
                - expression: "has(object.metadata.labels) && ['app', 'owner', 'cost-center'].all(key, key in object.metadata.labels)"
                  message: "Deployments must carry the labels app, owner, and cost-center."

A policy like this is the perfect candidate for the "Audit-then-Enforce" strategy. Start by deploying it in Audit mode to discover which teams need to update their Deployment manifests. This is the "gentle" part of the enforcement: it avoids the developer revolt that often follows when governance teams enforce tagging standards retroactively, a mistake commonly seen in other ecosystems (like Azure). Once you've given teams time to comply, you can switch to Enforce mode.
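For teams updating their manifests, a compliant Deployment might look like the sketch below. The application name, label values, and image are placeholders. The policy's expression inspects the Deployment's own metadata.labels, not the labels on the pod template.

```yaml
apiVersion: apps/v1
kind: Deployment
metadata:
  name: checkout                      # hypothetical application
  labels:
    # The three labels required by the policy
    app: checkout
    owner: payments-team
    cost-center: cc-1234
spec:
  replicas: 2
  selector:
    matchLabels:
      app: checkout
  template:
    metadata:
      labels:
        # Pod template labels are separate and are not checked by this policy
        app: checkout
    spec:
      containers:
        - name: checkout
          image: my-trusted-registry.io/checkout:1.4.2   # placeholder image
```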

The payoff is immense: operational blind spots are eliminated, and "who owns this?" becomes a solved problem. Finance teams can finally get the clear cost allocation reports they've been asking for. This isn't just about hygiene; it's about running Kubernetes like a business.

Conclusion

Kyverno's validation policies are more than just a security tool; they are a flexible, powerful, and developer-friendly way to bring order and sanity to your Kubernetes clusters. By acting as helpful guardrails, offering a safe audit mode for rollouts, leveraging the power of CEL, and enforcing operational best practices like tagging, you can build a more secure, reliable, and collaborative platform.

To leave you with a final thought: What is the one small, unenforced standard in your cluster that, if automated with a policy, would have the biggest positive impact?

📚 Recommended Reading & Resources

The "Real World" Story: Scaling Policy Enforcement: Lessons from Adevinta's Migration. The engineering blog post referenced in this article. A must-read for understanding the operational side of moving to Kyverno and the memory/performance benefits observed at scale.

The Sandbox: Kyverno Policy Playground. Don't test in production! Use this interactive playground to write, test, and debug your validation policies and CEL expressions directly in your browser before applying them to your cluster.

The Official Guide: Kyverno Validation Rules Documentation. The complete reference for writing validation policies, including deep dives on patterns, anchors, and failure actions.

The Deep Dive: Kubernetes Common Expression Language (CEL) Reference. Everything you need to know about the syntax and capabilities of CEL within Kubernetes. Bookmark this for when you need to write complex logic that goes beyond simple pattern matching.

The "Why": CEL-ebrating Simplicity: Mastering Kubernetes Policy Enforcement. A great overview from the CNCF blog on why the industry is shifting toward CEL and how it simplifies the policy landscape for platform engineers.